This is the second instalment of my series about developing a music VR experience as part of the Immersion Fellowship. The first goes over my initial background research, and how I arrived at the research question I wanted to explore through this project:

How can we design immersive musical experiences that make use of the gestural affordances of VR technology and support embodied interaction?

In this post, I’ll be discussing designing and developing the prototype, as well as some early sharing and testing.

Designing for Flow States

To help design immersive interactive experiences, a useful concept is the idea of flow states, which describes an “optimal” state of being in which a person is completely absorbed in the activity or situation at hand. Allowing users to reach flow states is important for promoting creativity, engagement and enjoyment in an experience.

Flow is a highly influential concept in Human-Computer Interaction (HCI) design, and has been studied in great depth within musical HCI. An important aspect of promoting flow states in music creation is facilitating rapid cycles of creating, auditioning and editing musical material. Basically, if you’re trying to get a musical idea down, you don’t want to have to deal with delays like loading times, or navigate a series of sub-menus to find a specific feature.

Design

I wanted to create an environment where users could create their own instruments, and which provided a rapid interaction cycle of creating, editing, and playing. The idea I came up with was to have interactive strings that users could draw, manipulate and play on the fly.

An instrument that I was heavily inspired by was the Hyper Drumhead, which lets users draw, play and manipulate drum heads on an interactive 2D surface. I liked the idea of rapid cycles of creating an instrument, playing it and editing it. It successfully blends magical and natural interactions in a creative way, with magical interactions in the drawing of drums, and natural interactions being supported by striking the drum heads, which creates an expected drum-like sound. It also reflects a lot of the themes I’m interested in around end-users being in control of the relationships between gestures and sound.

I was also inspired by the popular VR experience Tilt Brush, a 3D painting experience. I liked how gesturally focused the experience was, and I thought that being able to “paint” a musical instrument in a similar way would be an engaging interaction.

Some of my early designs focused on using a Leap Motion or other freehand interaction devices, but I decided to focus instead on the standard motion controllers of consumer VR hardware. This was partly for future-proofing (who knows whether current compatibility between VR hardware and third-party devices will continue in the long run?) and partly to cast as wide a net as possible for potential end-users: VR is already a niche market, and limiting your users to those who own a VR headset as well as other sensor hardware would severely shrink your user base. I wanted to make the most of the existing gestural affordances of proprietary VR hardware, and I have been developing the prototype for the Oculus Rift.

Drawing

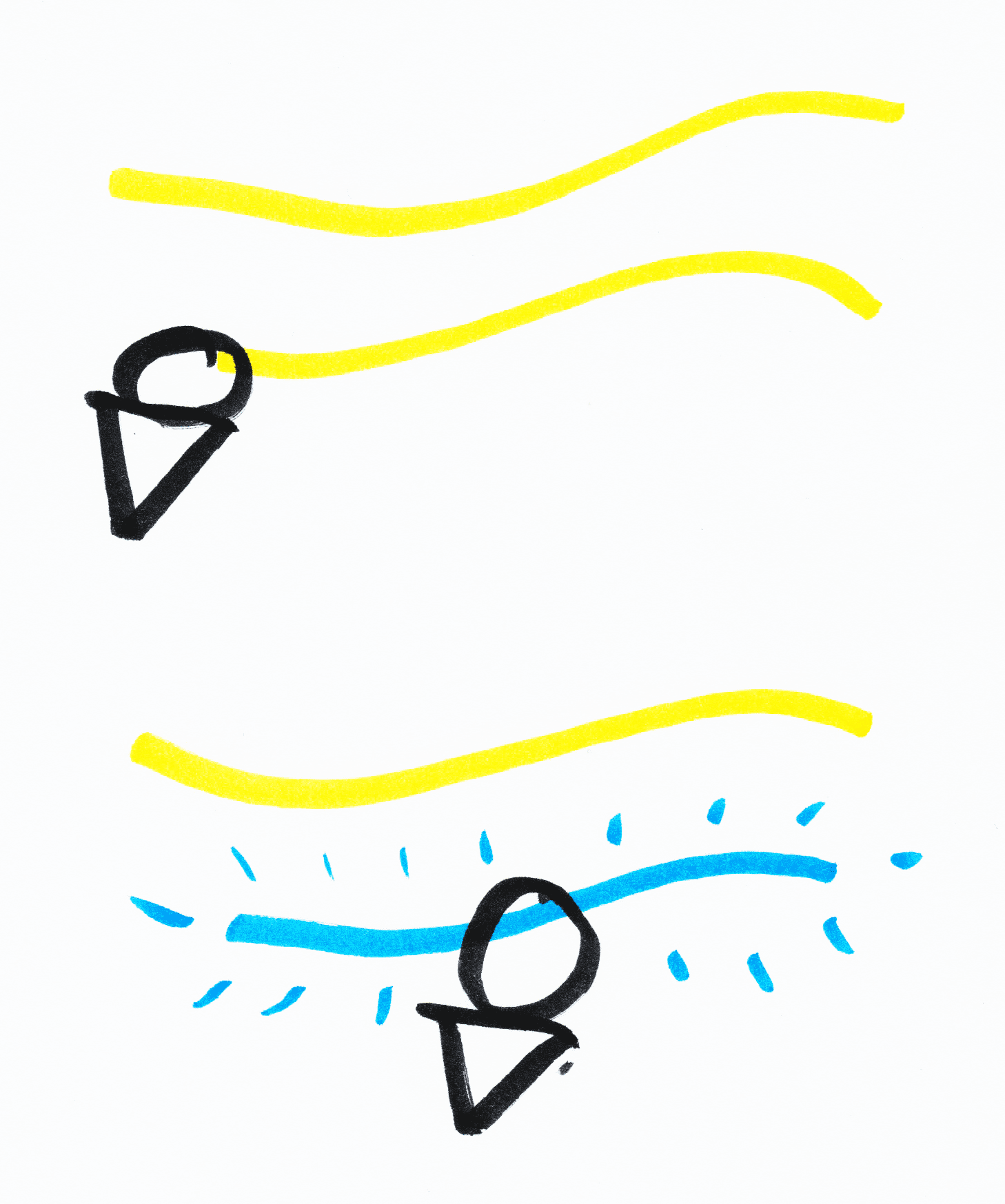

In this prototype, strings are drawn in the air, with the string taking the shape of the gesture performed by the user. I initially thought about having straight strings drawn between the points in space where the user began and finished drawing. This would have provided a more natural interaction, mirroring the way strings need to be pulled taut in traditional, physical instruments, but I opted for the wiggly option, as it felt like an opportunity to include a “magical” interaction. The act of drawing strings in mid-air is itself a magical interaction not present in physical instruments, and having the string follow the user’s gesture would encourage them to perform interesting gestural shapes. It also affords a magical interaction in the playing aspect: how does a twisted, curly string sound different to a straight one?

Strings can be drawn magically in mid-air and then plucked to trigger sound
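To make the "string follows the gesture" idea concrete, here is a minimal, engine-agnostic Python sketch of one way a drawn string could be recorded. It is not the prototype's Blueprint logic: the `StringStroke` class, the `add_sample` method and the 2 cm spacing are all assumptions made for illustration. The idea is to store the controller position only once it has moved far enough from the last stored point, so the string becomes a polyline that traces the gesture without piling up redundant points.

```python
import math

Point = tuple[float, float, float]

MIN_SPACING = 0.02  # metres between stored points (assumed value)


class StringStroke:
    """Collects controller positions while the user is drawing (hypothetical helper)."""

    def __init__(self, min_spacing: float = MIN_SPACING) -> None:
        self.min_spacing = min_spacing
        self.points: list[Point] = []

    def add_sample(self, position: Point) -> bool:
        """Store the position unless it is too close to the last stored point.

        Returns True if the point was stored, False if it was skipped.
        """
        if self.points and math.dist(self.points[-1], position) < self.min_spacing:
            return False
        self.points.append(position)
        return True

    def length(self) -> float:
        """Total length of the polyline, summed segment by segment."""
        return sum(math.dist(a, b) for a, b in zip(self.points, self.points[1:]))


# Usage: feed in one controller position per frame while the trigger is held.
stroke = StringStroke()
for frame_position in [(0.0, 1.0, 0.0), (0.005, 1.0, 0.0), (0.05, 1.02, 0.0)]:
    stroke.add_sample(frame_position)
```

A straight-line string would only need the first and last points; keeping the intermediate samples is what lets the shape of the gesture survive into the instrument.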

Playing

Playing the strings felt like a more appropriate place to use natural interactions that mirror traditional instruments. This was partly to support the user’s understanding of how to play the strings, and partly to leverage their existing experience with instruments. Currently, the strings are played by hitting them with a motion controller, which mirrors a “plucking” or “striking” instrumental metaphor. Natural mappings between a musician’s gestures and the sound are maintained: the length of the string determines the pitch of the note, the strength of the strike determines the loudness, and the sound characteristics match a plucked or struck string instrument (short attack and long decay). As mentioned earlier, the “twistiness” of the string required a novel mapping choice. It is mapped to the tonality of the note: the more twisted the string, the more metallic the sound.

Strings with different “twistiness” could have different sound properties
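The three mappings described above (length to pitch, strike strength to loudness, twistiness to timbre) can be sketched in a few lines of Python. This is an illustrative guess rather than the prototype's actual implementation: the reference tuning, the maximum strike speed, the inverse-proportional pitch rule (borrowed from an ideal physical string) and the definition of twistiness as "one minus straight-line distance over drawn length" are all assumptions.

```python
import math

Point = tuple[float, float, float]

REFERENCE_LENGTH = 1.0   # metres; a string this long plays REFERENCE_FREQ (assumed)
REFERENCE_FREQ = 220.0   # Hz, i.e. A3 (assumed tuning)
MAX_STRIKE_SPEED = 3.0   # m/s that maps to full loudness (assumed)


def path_length(points: list[Point]) -> float:
    """Length of the drawn string, summed along its segments."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


def pitch_hz(points: list[Point]) -> float:
    """Longer strings sound lower: frequency is inversely proportional to length."""
    length = path_length(points)
    if length <= 0.0:
        raise ValueError("a string needs a non-zero length")
    return REFERENCE_FREQ * REFERENCE_LENGTH / length


def loudness(strike_speed: float) -> float:
    """Map controller speed at impact to a 0..1 gain, clamped at both ends."""
    return max(0.0, min(1.0, strike_speed / MAX_STRIKE_SPEED))


def twistiness(points: list[Point]) -> float:
    """0.0 for a straight string, approaching 1.0 as it curls back on itself."""
    arc = path_length(points)
    if arc == 0.0:
        return 0.0
    chord = math.dist(points[0], points[-1])
    return 1.0 - chord / arc


# Usage: a straight half-metre string versus an L-shaped two-metre string.
straight = [(0.0, 0.0, 0.0), (0.5, 0.0, 0.0)]
bent = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0)]
params_straight = (pitch_hz(straight), twistiness(straight))
params_bent = (pitch_hz(bent), twistiness(bent))
```

The twistiness value could then drive whatever "metallic" parameter the audio middleware exposes. Keeping it in the 0 to 1 range makes that hand-off straightforward.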

Editing

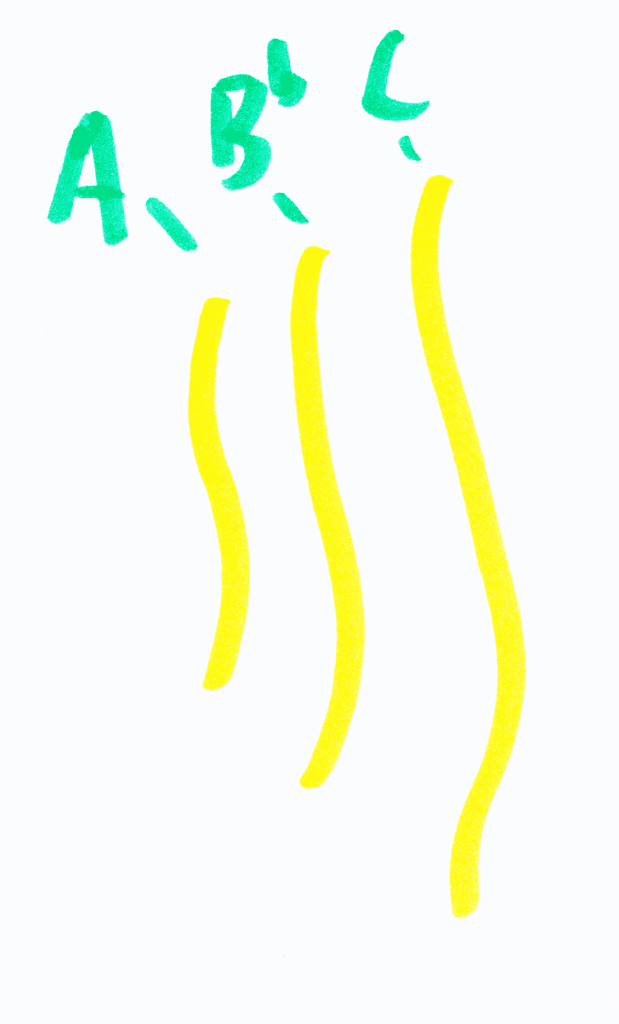

I thought that being able to adjust and edit strings once created would be an important feature. The current implementation of editing is quite simple: users can delete strings, move strings around, or change the length, and thus the pitch, of existing strings. The moving function, although simple, proved to be quite useful for ergonomic purposes, such as making it much easier to arrange and strum chords.

The length of a string would determine the pitch, a “natural” interaction based on real-world sound properties

Development

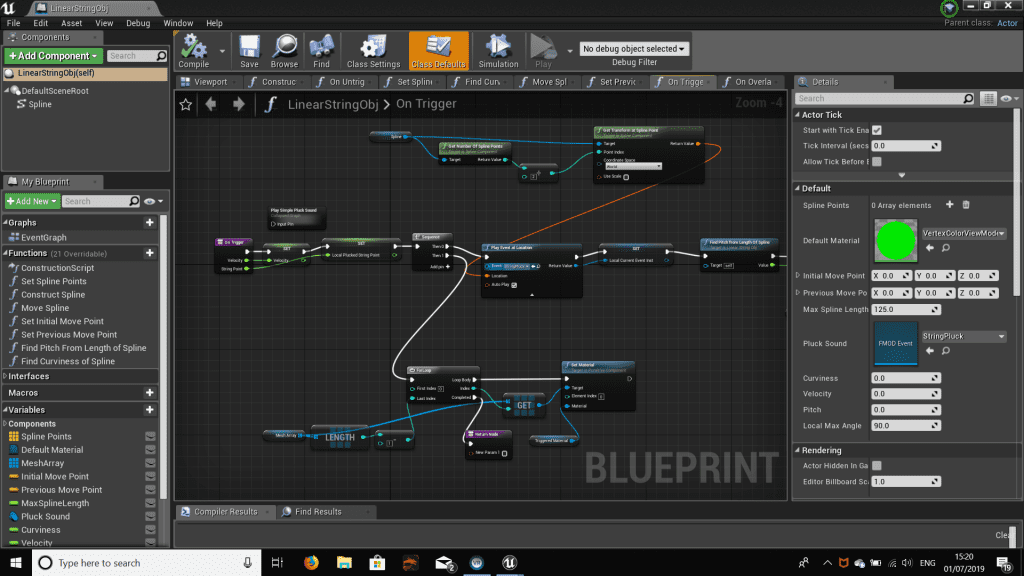

I decided to build the prototype in Unreal Engine 4. This was in part because it uses C++, which I have experience in, but mainly due to the engine’s Blueprints interface: a node-based visual programming tool that makes developing in the game engine more accessible, and is also useful for prototyping. For someone who is new to developing in game engines, the domain specific nature of Blueprints and the wealth of online tutorials and resources helped me to implement functionality and features quickly within established methods.

As the main aspect of this prototype is sound, I used a dedicated piece of audio middleware, FMOD, which gave me a detailed level of control over audio while still allowing me to rapidly prototype sound implementation.

The prototype being developed in Blueprints

At this stage of development, the prototype looked and behaved like this.

Initial user testing

With the draw, edit and play functionality described earlier fully implemented, I ran a small user test with ten participants. This was to get some initial qualitative insights into how effective the prototype is, and to work out what needed to be added next. Each participant spent around 10 minutes exploring the prototype. As part of the aim of this prototype is to provide an immersive experience,

Feedback from participants was about, well, feedback. Users were having issues drawing strings of the right length for the note they wanted, as there was no feedback as to what the note would be until they completed drawing it. This problem was mostly experienced by users with musical experience, as they had a specific idea in mind they were trying to implement in the environment. The users with less experience weren’t so bothered by this, as they were focusing on exploring and discovering interesting sounds as opposed to trying to play specific melodies.

Another thing that came up was that participants expected to be able to trigger the strings with other parts of their body, such as their arms and head, highlighting the importance of the design principle “represent a player’s body”.

“This part of my arm was touching the line so why wasn’t the sound playing, it’s only my hand that has to move through the line. That felt a little disconnected.”

One thing that I observed from the initial user testing was how the users were using the strings to draw shapes, pictures, smiley faces, their names etc., and they were expecting their drawings to behave differently. In its current form, the interaction suggested to users that it was a visual drawing tool.

Learning from this, I realised that the prototype lacked constraints in the drawing process. By limiting, or constraining, what users can do within an interaction, you give users information about how the interface or experience behaves, and how their actions will affect the environment. By designing clear constraints, you can inspire creativity, as users use your constraints as building blocks for their ideas.

In summary, I needed to:

- Add a player avatar to represent a player’s body.

- Add constraints to help guide and inform users’ interactions.

- Add feedback about the musical properties of the string objects.
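For the third item, one possible approach (an assumption on my part, not something the prototype does yet) would be to show the nearest note name while the string is still being drawn. The Python sketch below converts a frequency into a note name using standard twelve-tone equal temperament with A4 at 440 Hz. The `nearest_note` function name is hypothetical, and how the frequency is derived from the string's length is left to whatever pitch mapping is in use.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]
A4_FREQ = 440.0   # Hz, standard concert pitch
A4_MIDI = 69      # MIDI note number of A4


def nearest_note(frequency_hz: float) -> str:
    """Return the closest equal-tempered note name, e.g. 'A4' for 440 Hz."""
    if frequency_hz <= 0.0:
        raise ValueError("frequency must be positive")
    # Each semitone is a factor of 2^(1/12), so 12 * log2(ratio) counts semitones from A4.
    midi = round(A4_MIDI + 12.0 * math.log2(frequency_hz / A4_FREQ))
    octave = midi // 12 - 1  # MIDI note 60 is C4
    return f"{NOTE_NAMES[midi % 12]}{octave}"


# Usage: update a label next to the controller each frame while drawing.
label = nearest_note(220.0)
```

Displaying this label as the string grows would tell musically experienced users which note they are about to create, before they finish the gesture.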

Sharing and Next steps

I shared the prototype’s current progress with residents and members of the public at the Pervasive Media Studio, where I gave an afternoon talk. As well as being a great outlet for sharing, the insightful feedback, questions and discussion helped me decide on the next steps for the prototype.

It was clear that the prototype was at a crossroads, with several potential directions. I needed to decide what exactly the application of the prototype was going to be: was it an entertainment experience for users who wanted to engage in casual play? Was it a tool for expert musicians? I would ideally like to support both experienced and novice music makers, following a mantra espoused by Tilt Brush’s designers: Support doodlers and artists alike. I decided my focus, for now at least, should be on facilitating casual musical play and exploration. However, I was conscious of avoiding creating something that was too simple, which could end up being boring after a few minutes of use.

In my next post, I’ll discuss how I took what I learnt from the user testing and sharing and integrated it into the next stage of development. In the meantime, if you want to get in touch, find me on Twitter @dombrown94.